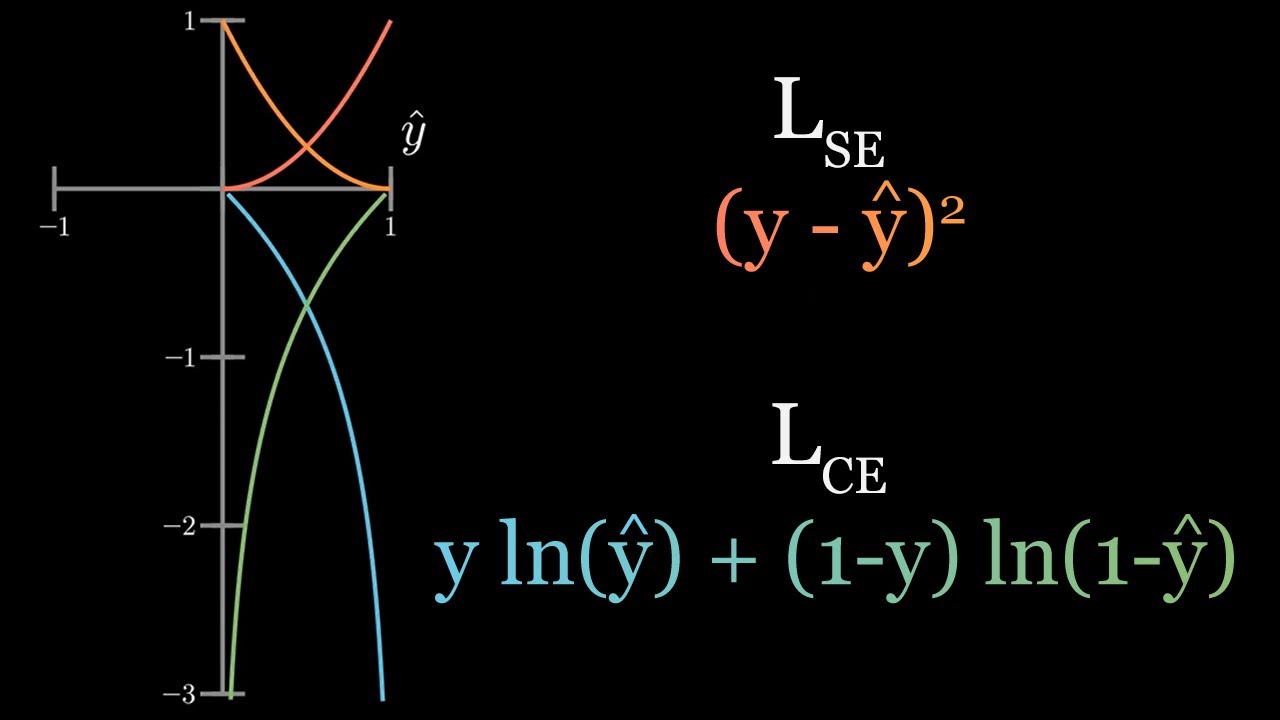

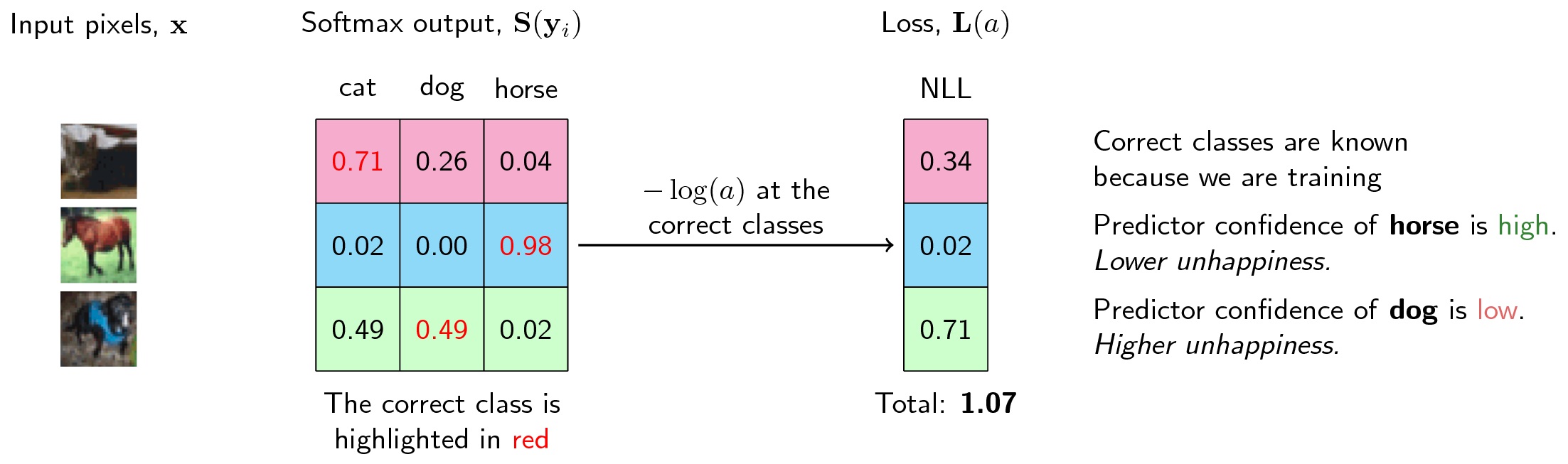

""" def _init_ ( self, num_classes, eps = 0.1, use_gpu = True, label_smooth = True ): super ( CrossEntropyLoss, self ). Since cross-entropy loss assumes the feature dim is always the second dimension of the features tensor you will also need to permute it first. label_smooth (bool, optional): whether to apply label smoothing. 1 Answer Sorted by: 4 You can compute multiple cross-entropy losses but you'll need to do your own reduction. use_gpu (bool, optional): whether to use gpu devices. Args: num_classes (int): number of classes. The reasons why PyTorch implements different variants of the cross entropy loss are convenience and computational efficiency. Usually, when using Cross Entropy Loss, the output of our network is a Softmax. When :math:`\eps = 0`, the loss function reduces to the normal cross entropy. import torch.nn as nn sizeaverage and reduce are deprecated reduction. math:: \begin where :math:`K` denotes the number of classes and :math:`\eps` is a weight. Public Member Functions Public Attributes List of all members. By default, the losses are averaged over each loss element in the batch. softmax activation function + cross-entropy loss function is often used, because cross-entropy. With label smoothing, the label :math:`y` for a class is computed by. This criterion computes the cross entropy loss between input logits and target. Rethinking the Inception Architecture for Computer Vision. Module ): r """Cross entropy loss with label smoothing regularizer. Some designs are better than others.Class CrossEntropyLoss ( nn. When designing a house, there are many alternatives. This applies only to multi-class classification - binary classification and regression problems have a different set of rules. To summarize, when designing a neural network multi-class classifier, you can you CrossEntropyLoss with no activation, or you can use NLLLoss with log-SoftMax activation. 
The `CrossEntropyLoss` with logits output technique (logits just means no activation applied) is really just wrapper code around the older `NLLLoss` with `LogSoftmax` technique. In short, when using the newer and simpler approach for multi-class classification, you don't apply any activation to the output, and `CrossEntropyLoss` applies log-softmax internally. When using the older approach, you apply `LogSoftmax` to the output, and `NLLLoss` assumes you've done so.

A possible 4-7-3 network definition and its associated training code differ between the two approaches in only a few statements (here `T` is the alias used for the `torch` package). In the older approach the network holds a Module, `self.apply_log_soft = T.nn.LogSoftmax(dim=1)`, and applies it to the output `z` in `forward()`. The function form `z = T.log_softmax(z, dim=1)` can be used instead of the Module, and `z = T.log(T.softmax(z, dim=1))` also works but is inefficient. The training code then uses `loss_func = T.nn.NLLLoss()`, which assumes `LogSoftmax()` has been applied, together with `optimizer = T.optim.SGD(net.parameters(), lr=lrn_rate)`.

When making a prediction with the `CrossEntropyLoss` technique, the raw output values are logits, so if you want to view probabilities you must apply softmax. With the older `NLLLoss` technique, the raw output values are the log of softmax, so if you want to view probabilities you must apply the `exp()` function.

The demo run on the right uses `NLLLoss` with `LogSoftmax` activation on the output nodes. Its results are identical to those of the `CrossEntropyLoss` approach.
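The equivalence of the two approaches can be checked with a small sketch of a 4-7-3 network. Several details here are assumptions made for illustration: the layer names `hid1` and `oupt`, the `tanh` hidden activation, the `use_log_softmax` switch, the random stand-in data, and the learning rate value.

```python
import torch as T


class Net(T.nn.Module):
    """4-7-3 classifier; use_log_softmax selects the older NLLLoss style."""

    def __init__(self, use_log_softmax):
        super().__init__()
        self.hid1 = T.nn.Linear(4, 7)   # assumed layer names
        self.oupt = T.nn.Linear(7, 3)
        self.use_log_softmax = use_log_softmax
        self.apply_log_soft = T.nn.LogSoftmax(dim=1)  # Module, no parameters

    def forward(self, x):
        z = T.tanh(self.hid1(x))        # assumed hidden activation
        z = self.oupt(z)                # logits: no activation applied
        if self.use_log_softmax:
            z = self.apply_log_soft(z)  # needed only for NLLLoss
        return z


T.manual_seed(1)
x = T.randn(6, 4)                       # random stand-in for 6 items, 4 predictors
y = T.tensor([0, 1, 2, 2, 1, 0])        # made-up class labels

net_ce = Net(use_log_softmax=False)
net_nll = Net(use_log_softmax=True)
net_nll.load_state_dict(net_ce.state_dict())  # identical starting weights

lrn_rate = 0.01
runs = [(net_ce, T.nn.CrossEntropyLoss()), (net_nll, T.nn.NLLLoss())]
losses = []
for net, loss_func in runs:
    optimizer = T.optim.SGD(net.parameters(), lr=lrn_rate)
    for _ in range(10):
        optimizer.zero_grad()
        loss_val = loss_func(net(x), y)
        loss_val.backward()
        optimizer.step()
    losses.append(loss_val.item())      # final loss of each run
```

Starting from the same weights and data, the two entries of `losses` should agree up to floating-point error, which is the sense in which one technique wraps the other.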

The demo run on the left uses `CrossEntropyLoss` with no activation on the output nodes. The `CrossEntropyLoss` with logits approach is easier to implement and is by far the most common approach. Suppose you are looking at the Iris Dataset, which has four predictor variables and three classes. If you are designing a neural network multi-class classifier for it using PyTorch, you can use cross entropy loss (`torch.nn.CrossEntropyLoss`) with logits output (no activation) in the `forward()` method, or you can use negative log-likelihood loss (`torch.nn.NLLLoss`) with log-softmax (the `torch.nn.LogSoftmax()` module or the `torch.log_softmax()` function) in the `forward()` method.
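Whichever option is used in `forward()`, class probabilities can be recovered at prediction time for a four-predictor, three-class problem. The sketch below is only illustrative: the single untrained `Linear` layer stands in for a trained network, and the input values are an example row, not results from the demo.

```python
import torch

torch.manual_seed(0)
oupt = torch.nn.Linear(4, 3)                 # stand-in for a trained network
x = torch.tensor([[5.1, 3.5, 1.4, 0.2]])     # illustrative four-predictor input

with torch.no_grad():
    logits = oupt(x)                                   # CrossEntropyLoss style output
    log_probs = torch.log_softmax(logits, dim=1)       # NLLLoss style output
    probs_from_logits = torch.softmax(logits, dim=1)   # apply softmax to logits
    probs_from_log = torch.exp(log_probs)              # apply exp() to log-softmax
```

Both routes should give the same three probabilities, each row summing to 1.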
